Ryan Jones is a wicked smart SEO who works with SapientNitro and rants on his personal blog (dotCult) about all things SEO, Penguin, and analytics. He’s one of the most “outspoken” marketers we know and Rhea will be moderating a panel with Ryan at the upcoming SMX East conference, October 2nd-4th in Manhattan. We hope to see you there! In the meantime, Ryan shares what you should be measuring and why.

When somebody comes to me asking for data I always ask the same questions: Why? What are you going to do with it? I don’t enjoy giving people a hard time [too much] and I’m not [that] lazy. I just want to make sure that they’re asking the right questions and answering them with the right data.

If you take one lesson away from this rant, let it be this: If you’re not asking questions, you’re not providing as much value as you could be. Chances are you were hired for your expertise, experience, knowledge, and insights – not simply your ability to sort numbers in Excel. The person asking you for data has expertise, experience, knowledge, and insights too – but if it’s not in your field then it’s your job to ask these questions and ensure you provide them with the right data to make the right decisions.

A good analyst needs to understand not only what they’re measuring but why they’re measuring it too. Proper analysis demands it.

It’s not enough to just measure what’s easily measurable; we also need a reason for measuring it. If there were a formula for increasing web sales our jobs would be easy, but there isn’t. The closest we come is that dreaded correlation word everybody keeps throwing around. When we apply statistics to our website data we can get an idea of things that correlate highly with sales. We can then focus on increasing or optimizing those “things.” It’s here that lots of companies run into trouble. I’d like to use a hypothetical (but based on a true story) example to illustrate just what can happen when people don’t ask why.

Imagine the following scenario: (Note: I’m making this up, but I see this type of thinking all the time.)

A senior executive learns (correctly) that YouTube views correlate highly with sales. The latest correlation data says so. The executive sends out the order but only includes the ‘what’ without the ‘why.’ “Hey, get us more YouTube views. They’re important,” he bellows, and his words echo throughout the company. Wanting to impress their boss, managers incorporate YouTube views into their bonus metrics and make them a priority.

Fast forward a few weeks and suddenly paid search ads are pointing to YouTube instead of the company website. The company homepage is driving people to YouTube instead of into the shopping cart. Product features and testimonials have been replaced with embedded YouTube videos. YouTube video descriptions now call out more videos from the company. Sales are down and mid-level managers are screaming that we obviously need more YouTube views.

It sounds absurd, but I’ve actually seen this exact scenario happen at some very large companies (thankfully, not so much within my company). Go ahead and substitute YouTube views with Facebook likes, PageRank, MozRank, Alexa rank, Twitter followers, retweets, reviews, ratings, plus ones, links, diggs, sphinns, or any other easy-to-measure number you prefer – it doesn’t change the story. Do you see the underlying issue? The problem is that it’s easy to focus so much on what to measure that we forget why we’re measuring it in the first place.

This is quite common in large corporations where departments are siloed and it’s easy for people to focus on their own goals rather than the company’s best interests, but it can happen within smaller companies too – usually when people stop asking “why?” (Pro Tip: your bonus is nice but your salary pays more – focus on optimizing that.)

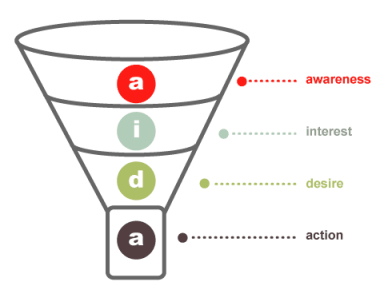

OK, back to the story. Our executive was right. YouTube views did correlate highly with sales, but let’s remember that correlation doesn’t imply causation. (Seriously, SEOs, please understand that point.) Just because two things are correlated doesn’t mean that one causes the other. It’s important to understand why YouTube views correlate with sales. For this, we need to go back to our Marketing 101 sales funnel. It kind of looks like this:

Awareness, Interest (or consideration), Desire, Action. There are probably 200 other versions of the sales funnel but we’ll stick with this one. I want to focus on the top of the funnel. That’s where metrics like YouTube views, Facebook likes, and even many of your advertising campaigns fall. They’re what we call “High Funnel Activities” or “HFAs” for short. The nice thing about HFAs is that they often lead to LFAs (Lower Funnel Activities). Both HFAs and LFAs are subclasses of KPIs but we’ll cover all of that in Marketing 201 next semester. For those who are still taking Marketing 101, the marketing funnel actually works just like that funnel at the top of your beer bong; the more you fill the top, the more comes out at the bottom. Theoretically, increased awareness leads to increased action – except that didn’t happen in our above example. Why not?

In our example we were so focused on ensuring that the top of the funnel was full that we forgot about the bottom. Instead of pouring more into it, we just stuck a cork in the bottom. The top stayed full and we hit our awareness goals, but only because we trapped customers in the awareness stage and wouldn’t let them leave. In the real world, it’s quite similar to replacing the cash register with a sales pitch. Customers are standing in line with money in their hands and instead of taking it you’re telling them how awesome your product is. Stop talking and let them buy it.
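If you'd rather see the cork in numbers, here's a rough sketch of the funnel as a chain of stage-to-stage conversion rates. Everything in it is an assumption made up for illustration: the `funnel_counts` helper, the visitor counts, and the rates themselves. Real funnels are leakier and messier than three multiplications.

```python
from typing import List


def funnel_counts(top: float, rates: List[float]) -> List[float]:
    """Expected number of people at each stage, given how many enter the top
    and the fraction that moves from each stage to the next."""
    counts = [top]
    for rate in rates:
        counts.append(counts[-1] * rate)
    return counts


# Hypothetical rates: awareness -> interest -> desire -> action
healthy_rates = [0.30, 0.20, 0.10]
# Same funnel, but the last step is "corked" (e.g. the cart is replaced by more videos)
corked_rates = [0.30, 0.20, 0.01]

baseline_sales = funnel_counts(10_000, healthy_rates)[-1]
doubled_top_sales = funnel_counts(20_000, healthy_rates)[-1]
corked_sales = funnel_counts(20_000, corked_rates)[-1]

print(f"baseline: {baseline_sales:.0f} sales")
print(f"double the awareness: {doubled_top_sales:.0f} sales")
print(f"double the awareness, corked bottom: {corked_sales:.0f} sales")
```

With a healthy funnel, doubling the top doubles what comes out of the bottom. Cork the last step and you can pour in twice as much awareness and still end up with fewer sales than you started with.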

It may sound extreme, but I’ll reiterate that I’ve seen several companies face this exact issue in the past. They didn’t understand why things were correlated so they couldn’t properly use that correlation to their advantage.
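Here's a minimal simulation of why that goes wrong. It assumes a toy world where weekly awareness drives both views and sales, and then a "gamed" version where extra views are bought by redirecting traffic away from the cart. The coefficients, the noise levels, and the `pearson` helper are all hypothetical; this is a sketch of the idea, not a model of any real company's data.

```python
import math
import random


def pearson(xs, ys):
    """Plain Pearson correlation coefficient between two equal-length lists."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (spread_x * spread_y)


rng = random.Random(42)  # fixed seed so the run is repeatable
weeks = 200

# Hidden driver: how many people became aware of the product each week.
awareness = [rng.uniform(100, 1000) for _ in range(weeks)]

# Organic world: awareness produces both views and sales (made-up coefficients).
organic_views = [5 * a + rng.gauss(0, 200) for a in awareness]
organic_sales = [0.2 * a + rng.gauss(0, 10) for a in awareness]

# Gamed world: traffic is redirected to videos. Views go up, but those
# visitors never reach the cart, so each pushed view costs a little in sales.
pushed = [rng.uniform(0, 5000) for _ in range(weeks)]
gamed_views = [5 * a + p for a, p in zip(awareness, pushed)]
gamed_sales = [0.2 * a - 0.01 * p + rng.gauss(0, 10) for a, p in zip(awareness, pushed)]

organic_r = pearson(organic_views, organic_sales)
gamed_r = pearson(gamed_views, gamed_sales)
avg_organic_sales = sum(organic_sales) / weeks
avg_gamed_sales = sum(gamed_sales) / weeks

print(f"organic correlation: {organic_r:.2f}, average sales: {avg_organic_sales:.1f}")
print(f"gamed correlation:   {gamed_r:.2f}, average sales: {avg_gamed_sales:.1f}")
```

In the organic world the two numbers move together because something else (awareness) moves both of them. Once you start pushing on views directly, the views go up, the correlation weakens, and sales go down, which is the executive's story in about forty lines.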

Analytics isn’t about compiling numbers. That’s called reporting and it can be automated (or assigned to interns). Real analytics involves insight. Analysts need to understand not only what is happening but why it’s happening and what we should do about it. That’s called actionable reporting and if you’re interested in that you won’t want to miss the performance SEO metrics session featuring me (@ryanjones), @rhea, @vanessafox, and @statrob at SMX East next month.

- Just because something is easy to measure doesn’t mean it should be measured. Make sure you understand why you’re measuring it.

- Correlation is NOT causation. In fact, optimizing one side of the equation may actually change the correlation.

- Understand your goals first, THEN pick metrics that line up with those goals. DON’T establish goals based on metrics.

- Don’t miss the forest for the trees. Look at all your metrics together to tell the complete story.